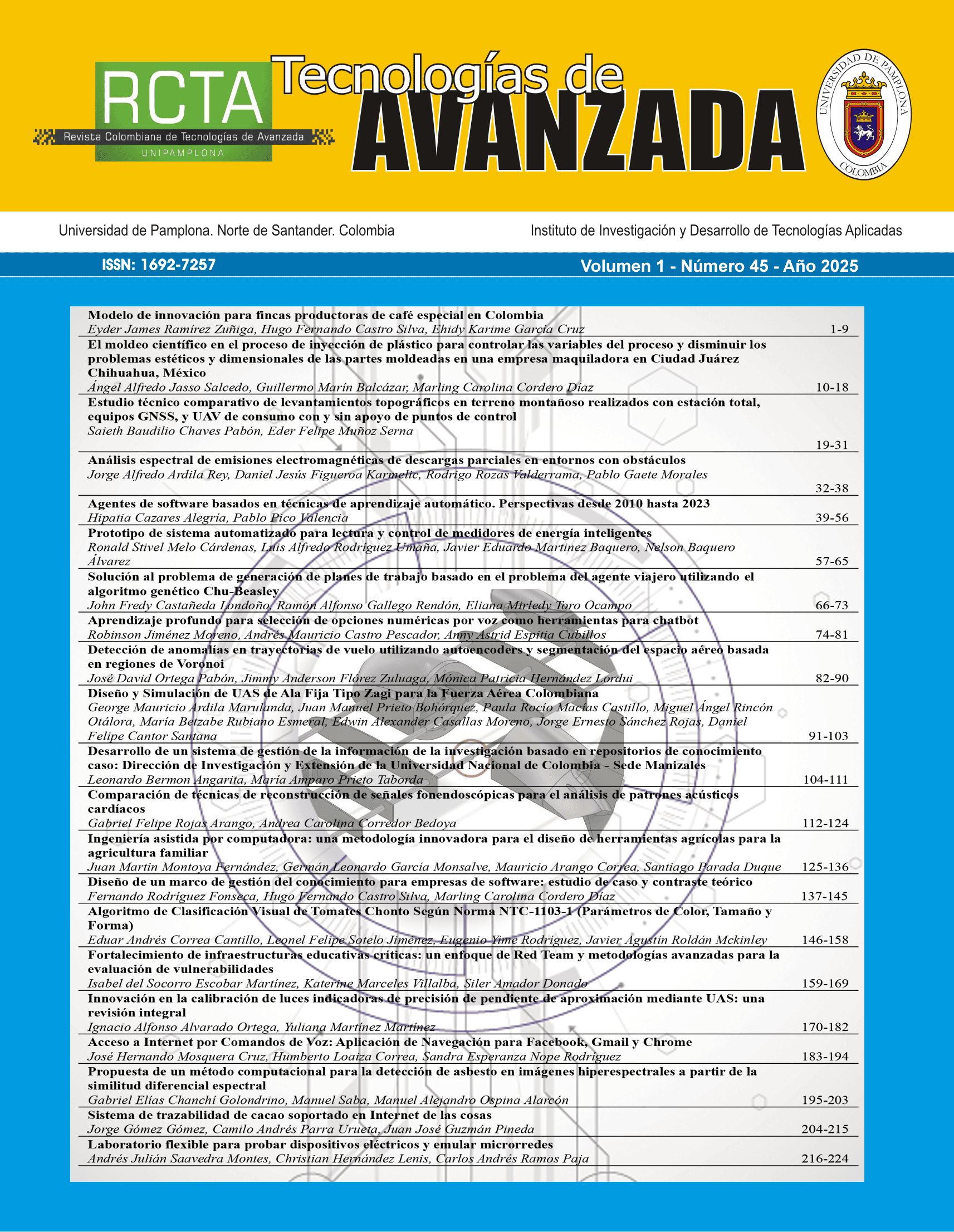

Deep learning for voice-based selection of numeric options as a chatbot tool

DOI: https://doi.org/10.24054/rcta.v1i45.3044

Keywords: Deep learning, Artificial intelligence, Robotics, Application, Chatbot

Abstract

This paper presents the design of a voice-operated chatbot assistant that follows a dialogue model between user and robot. The assistant is trained with deep learning algorithms on a database of spectrograms built from both male and female voices, using the short-time Fourier transform and Mel-frequency cepstral coefficients as signal preprocessing techniques. For voice pattern recognition and classification, five convolutional network architectures are designed with the same parameters. Their training performance is compared: all five reached accuracy above 92.8%, and the number of layers is observed to affect the number of learnable parameters, the accuracy, and the file size of each network. In general, more layers increase both training time and classification time. Finally, for validation through a chatbot app, the selected network design is applied to completing a survey that uses a Likert scale from 1 to 5, in which users state their chosen option and then confirm it with a Yes or a No. The app plays the audio of each question, displays its identifier, and listens to and confirms the user's responses. It is concluded that the selected network design makes it possible to develop chatbot applications based on audio interaction.
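The survey dialogue described in the abstract (play a question, listen for an option from 1 to 5, then confirm it with a yes or no) can be sketched as a small loop. This is a minimal illustration, not the authors' implementation: the names `run_survey`, `recognize`, `say`, `scripted`, and `max_attempts` are hypothetical, and the voice classifier is replaced by a callable that returns a label string such as `"3"`, `"yes"`, or `"no"`.

```python
from typing import Callable, Dict, Iterable, List, Optional, Tuple

# Labels the (assumed) voice classifier may return for a Likert answer.
LIKERT = {"1", "2", "3", "4", "5"}


def run_survey(
    questions: List[Tuple[str, str]],
    recognize: Callable[[], str],
    say: Callable[[str], None] = print,
    max_attempts: int = 3,
) -> Dict[str, Optional[int]]:
    """Ask each question and keep an answer only after a spoken 'yes'.

    `recognize` stands in for the trained network: each call returns the
    label of the next utterance. `say` stands in for audio playback.
    """
    answers: Dict[str, Optional[int]] = {}
    for qid, text in questions:
        for _ in range(max_attempts):
            say(f"[{qid}] {text}")            # show the identifier, play the question
            option = recognize()              # listen for the selected option
            if option not in LIKERT:
                say("Please answer with a number from 1 to 5.")
                continue
            say(f"You said {option}. Is that correct?")
            if recognize() == "yes":          # confirmation step
                answers[qid] = int(option)
                break
        else:
            answers[qid] = None               # no confirmed answer within the limit
    return answers


def scripted(labels: Iterable[str]) -> Callable[[], str]:
    """Build a fake recognizer that replays a fixed list of labels."""
    it = iter(labels)
    return lambda: next(it)
```

Passing the recognizer in as a callable keeps the dialogue logic testable without audio hardware; a real app would wrap the microphone capture, spectrogram preprocessing, and network inference behind the same interface.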




License

Copyright 2025 Robinson Jiménez Moreno, Andrés Mauricio Castro Pescador, Anny Astrid Espitia Cubillos

This work is licensed under a Creative Commons Attribution-NonCommercial 4.0 International license.